Smart Watches, Dolphins and Evolution

Watches seem to be a bad fit in the modern world where time is all around us. Glance at your phone or your tablet, and there’s a clock. Glance at your computer screen, TV, car dashboard or digital camera, and there’s a clock. Fish your Fitbit out of your pocket, and it has a clock. Today, keeping time is so cheap that even your oven has its own clock.

It wasn’t always so. 500 years ago, clocks could be found only on clock towers. 250 years ago, they came to our living rooms. Then, to your grandfather’s pocket. And then, in a seemingly endless feat of miniaturization, they came to your wrist.

However, in today’s world of Apple iPhones and Google Glass, the uni-tasking device on your wrist has been reduced to a fashion statement and/or a status symbol. But you don’t really need it.

At least, I don’t.

What would come in handy, though, is something else — an external screen for my phone. Something to display text messages, incoming calls, e-mails, weather info, and all the things the phone can think of, so that I don’t have to reach for it every time it blips.

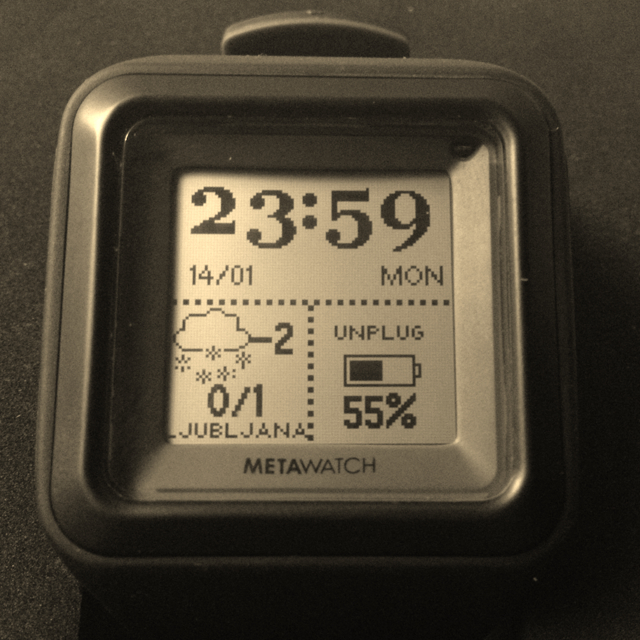

That’s why I’ve been testing a smart watch (MetaWatch Strata, more below). It pairs with your phone using Bluetooth Low Energy and does all that, with hopefully more to come.

A quick review

Here are some of the features:

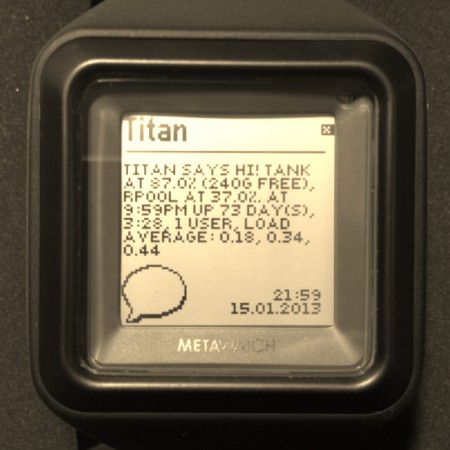

- Shows texts; see below for a notification from one of my servers reporting some stats [1]

- Shows events from calendars synced to your iPhone; this includes Gmail, Exchange, and surprisingly, FB birthdays

- Displays the weather forecast, which is of course location-sensitive; the phone already knows where you are, so the weather is always local

- Shows stocks and phone battery level

- Displays incoming calls, which you can also reject by pushing a button

- Has media player controls

- Features a vibrating motor to alert you to a call, text or calendar event

- Includes a 3-axis accelerometer and an ambient light sensor, both unused in the current firmware

All this works by pairing the watch to your iPhone (Android is supported, but currently lacks many features). In fact, you have to pair it as a Bluetooth 2.1 device for displaying texts and incoming calls (as you would your Bluetooth car kit), and as a Bluetooth 4.0 device for everything else.

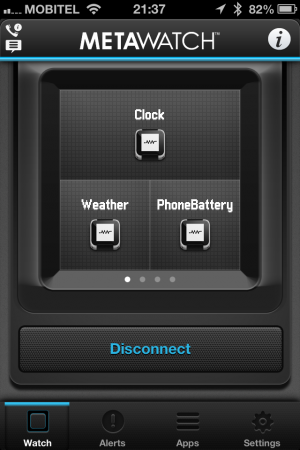

Once paired, you run the app (MetaWatch Manager) that manages the widgets on the watch. You get 4 screens and you can place on them anything from 4 small widgets to 1 large widget occupying the entire 2×2 grid.
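To make the layout rule concrete, here is a minimal sketch in Python of how a 2×2 screen grid could be modeled. This is not MetaWatch Manager's actual code: the `Widget` class, its fields and `layout_is_valid` are hypothetical names invented for illustration, and the only facts taken from the watch are the 4 screens and the 2×2 grid.

```python
from dataclasses import dataclass

GRID = 2      # each screen is a 2x2 grid of cells (from the description above)
SCREENS = 4   # the watch offers 4 screens (from the description above)


@dataclass(frozen=True)
class Widget:
    """Hypothetical widget: top-left cell plus a size measured in cells."""
    name: str
    col: int
    row: int
    width: int = 1
    height: int = 1

    def cells(self):
        # Every grid cell this widget covers.
        return {
            (c, r)
            for c in range(self.col, self.col + self.width)
            for r in range(self.row, self.row + self.height)
        }


def layout_is_valid(widgets):
    """True if all widgets fit inside one screen and none overlap."""
    used = set()
    for w in widgets:
        cells = w.cells()
        if any(not (0 <= c < GRID and 0 <= r < GRID) for c, r in cells):
            return False  # widget sticks out of the 2x2 grid
        if used & cells:
            return False  # widget overlaps one placed earlier
        used |= cells
    return True


# One large widget filling the screen, or four small ones, are both fine.
full_screen = [Widget("clock", 0, 0, width=2, height=2)]
four_small = [
    Widget("weather", 0, 0),
    Widget("stocks", 1, 0),
    Widget("calendar", 0, 1),
    Widget("battery", 1, 1),
]
```

A full set of watch faces would then just be a list of up to `SCREENS` such layouts, each checked with `layout_is_valid`.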

The software is supposed to be open source, and there are plenty of projects doing more with it. I haven’t looked into them yet.

One of the more peculiar things is its display, which is unlike anything I’ve seen before. It’s a Sharp low-power memory LCD with 96×96 pixel resolution, but the pixels are not black and white; the only two states are mirror-reflective and white. This takes some getting used to, so be sure to check whether you like it before buying. All photos are cleverly set up so that the display reflects only black, and the effect is not obvious unless you see it in a video. On the bright side, it requires almost no energy when it’s not updating (similar to e-ink), so the battery lasts for about a week.
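With only two states per pixel, a whole frame is tiny. The sketch below works out the arithmetic for a 96×96 display at 1 bit per pixel and shows one way such a frame could be packed into bytes. The row-major, least-significant-bit-first layout and the function names are assumptions made for illustration, not the format the watch or the Sharp panel actually uses.

```python
WIDTH = 96
HEIGHT = 96


def framebuffer_bytes(width=WIDTH, height=HEIGHT, bits_per_pixel=1):
    """Bytes needed for one frame, rounded up to a whole byte."""
    return (width * height * bits_per_pixel + 7) // 8


def new_framebuffer():
    # All bits 0; here 0 stands for "mirror" and 1 for "white" (assumed).
    return bytearray(framebuffer_bytes())


def set_pixel(buf, x, y, white):
    """Set one pixel, assuming row-major order, LSB-first within a byte."""
    index = y * WIDTH + x
    byte, bit = divmod(index, 8)
    if white:
        buf[byte] |= 1 << bit
    else:
        buf[byte] &= ~(1 << bit) & 0xFF


def get_pixel(buf, x, y):
    index = y * WIDTH + x
    byte, bit = divmod(index, 8)
    return bool(buf[byte] >> bit & 1)


frame = new_framebuffer()
set_pixel(frame, 0, 0, True)
print(framebuffer_bytes())  # 96 * 96 / 8 = 1152
```

At 1152 bytes per frame, a full screen is small enough to send over a low-bandwidth link without much trouble.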

So, is this wearable computing?

MetaWatch is of course not alone; there’s Pebble, which is just about to ship, with similar features. Pebble uses what it calls an e-paper display, so the battery life is reportedly similar (around a week). There’s also the Italian i’m Watch, which is entirely Android powered and uses an active display (hence the battery only lasts for about a day).

It’s quite obvious something is going on in that space, and that a new computing form-factor is emerging.

And if you think back, the exact same thing has happened before: computers started out as large mainframes, where all the computing power was centralized. They were accessed only through dumb terminals, which were little more than a remote keyboard and screen. Yet, gradually, technological progress killed the mainframe and made the dumb terminal the new protagonist. It became smart enough to survive by itself, sitting under your desk, then on your lap, and finally in your pocket. The smart phone in your pocket is indeed more powerful than your desktop computer was 10 years ago.

And yet again, there are new dumb terminals to take the place of the old. A smart watch is nothing but a dumb terminal for the mainframe in your pocket. Right now it can’t do much more than display what the mainframe has to say. But it might not be long before you don’t need the mainframe anymore.

The meandering path of evolution

I’ve been thinking lately about how all this relates to biology. Watches seem to have followed an evolutionary path similar to that of dolphins.

You see, first there were fish (bear with me). Gradually, some fish got tired of water, grew lungs and legs, came to land and became mammals. They evolved further, and eventually they became us. But something else also happened: a group of mammals got tired of land and went back to water. Dolphins are a part of that group, and so are whales. They might swim like fish, but they have lungs and have to come to the surface for air. And funnily, today they mostly hang out with fish (which have always been fish) and get confused with them by almost everybody.

Compare that to watches. A clock, as an ideal of craftsmanship, the ultimate precision mechanism, gradually evolves into a mechanical Babbage machine that fills an entire room. Later, that becomes a computer, and the computer gets smaller and smaller, until it becomes wearable, and finally migrates to your wrist. There, it hangs out with the old mechanical relics, only to be confused with them for years to come.

[1] I’m using Nexmo to deliver those, at a cost of 1 cent per message.
